Feature flags let you launch new features and change your software configuration without (re)deploying code. Even so, clients with global customer bases often hesitate to use them for certain use cases because they worry about added latency and slow response times.

That's why fast response time is of great importance at ConfigCat. For context, ConfigCat is a developer-centric feature flag service with unlimited team size, awesome support, and a reasonable price tag.

To that end, ConfigCat provides data centers at numerous global locations to ensure high availability and fast response time all around the globe. Each data center is equipped with multiple CDN nodes to guarantee proper redundancy, and multiple layers of load balancing, based on geolocation, help achieve speed, throughput, reliability, and compliance. Thanks to a previous DDoS incident, ConfigCat also got the chance to test its infrastructure in real life and made preemptive security improvements.

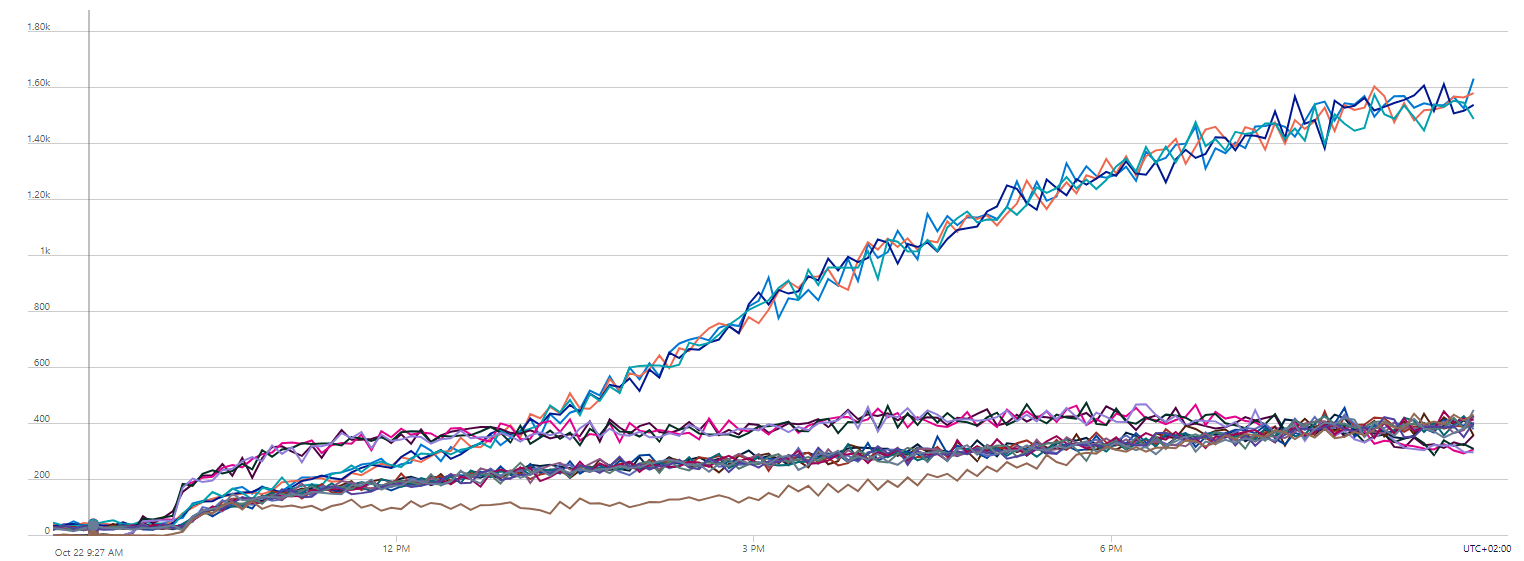

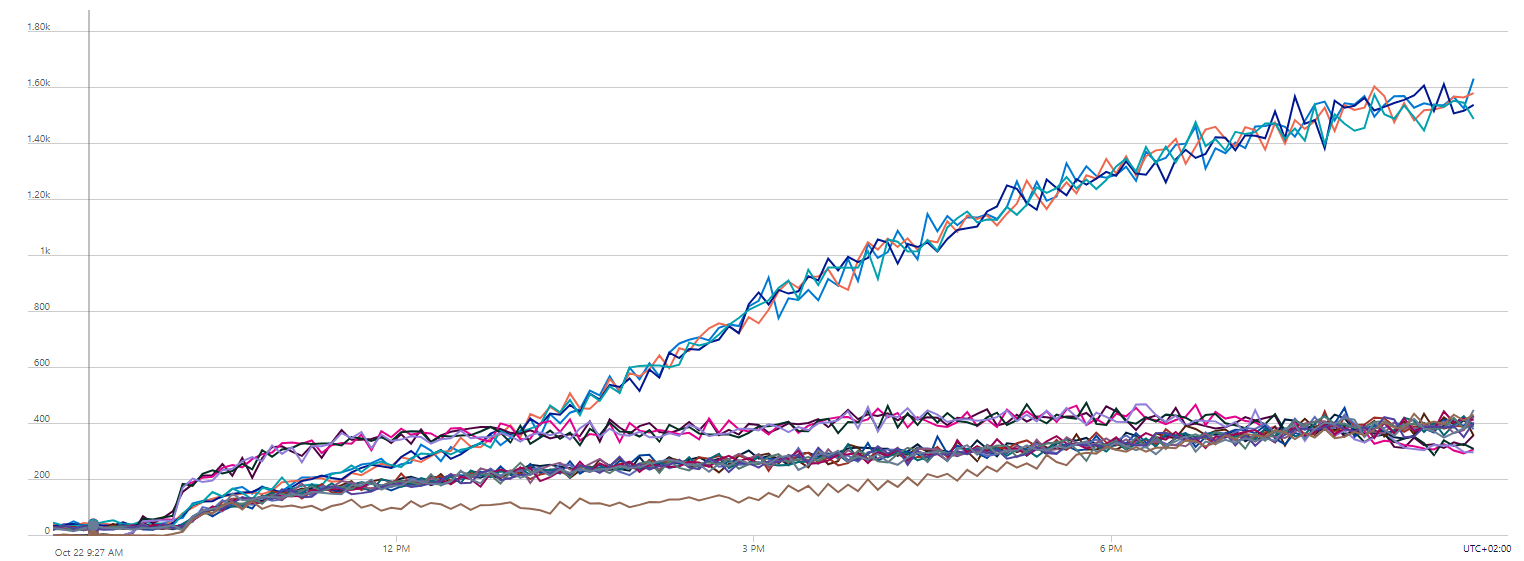

Multi-layered load balancing helps ConfigCat handle high traffic levels and provide fast, reliable service to users. Looking at the trend chart above, can you tell what it shows? Can you recognize the multiple layers of load balancing at work?

The distinct groups of lines fluctuating around different trends in the figure above show traffic hitting ConfigCat's servers. ConfigCat has these servers distributed around the globe, and of course, behind a load balancer.

However, it's not just a simple load balancer; it has to achieve four contradictory goals simultaneously. Let's delve into the specifics of how this came about.

Our CDN Was Designed with Four Goals in Mind

- Quick Response Times: Requests hitting ConfigCat's CDN should be answered by the servers closest to the request's source.

- Unlimited Throughput: The ConfigCat CDN should handle any increase in requests by simply adding more servers.

- Resilience to Server Failures: ConfigCat's CDN should tolerate the failure of individual servers; other servers should step in and take over the load.

- Resilience to Provider Failures: If one or two data centers experience issues, the ConfigCat CDN should remain online.

However, these requirements (just like in any interesting engineering problem) are contradictory.

Our Solution to These Challenges

Our approach involved utilizing multiple layers of load balancers and several providers.

- The load balancer in the top layer does geolocation-based routing to the second layer of load balancers.

- The second layer of load balancers distributes traffic equally across the servers under its control.

- A watchdog checks the individual servers and feeds their health status back to the load balancers in the second layer.

- Multiple providers manage the servers in redundant data centers.

How Everything Works in Theory

The top-layer load balancer achieves our first goal. Thanks to geolocation-based routing, requests always hit the closest group of servers, which achieves Goal #1 ✅.
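To make the idea of "closest group of servers" concrete, here is a minimal sketch of geolocation-based routing. It is not ConfigCat's implementation: the coordinates assigned to each region and the helper names `haversine_km` and `closest_region` are assumptions made up for illustration, and production systems typically resolve the client location from DNS or IP data rather than from raw coordinates.

```python
import math

# Hypothetical (latitude, longitude) anchor points for each region.
# These coordinates are illustrative assumptions, not ConfigCat's real locations.
REGIONS = {
    "West Coast": (37.77, -122.42),
    "East Coast": (40.71, -74.01),
    "Europe": (50.11, 8.68),
    "Singapore": (1.35, 103.82),
    "Australia": (-33.87, 151.21),
}


def haversine_km(a, b):
    """Great-circle distance in kilometers between two (lat, lon) points."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (
        math.sin((lat2 - lat1) / 2) ** 2
        + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2
    )
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def closest_region(client_location):
    """Return the name of the region whose anchor point is nearest to the client."""
    return min(REGIONS, key=lambda name: haversine_km(client_location, REGIONS[name]))
```

With these assumed coordinates, a client located in Paris, `closest_region((48.86, 2.35))`, would be routed to the Europe group.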

Requests hitting the second layer of load balancers will be distributed equally among CDN servers under their control. If there is an increase in the number of requests hitting a certain region of the world, we add new servers to the load balancer responsible for that region. Goal #2 ✅.
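As a rough sketch of this second layer, the snippet below uses plain round-robin to spread requests evenly and shows how adding a server increases capacity. Round-robin is an assumption here (the actual balancing algorithm is not described), and the class name `RegionalLoadBalancer` and the server names are hypothetical.

```python
from collections import Counter


class RegionalLoadBalancer:
    """Distributes requests evenly over the servers of one region (round-robin)."""

    def __init__(self, servers):
        self.servers = list(servers)
        self._next = 0  # index of the next request, used to rotate through servers

    def add_server(self, server):
        """Scale out: new servers start receiving traffic on subsequent picks."""
        self.servers.append(server)

    def pick(self):
        """Return the server that should handle the next request."""
        server = self.servers[self._next % len(self.servers)]
        self._next += 1
        return server


# Usage: traffic in the region grows, so a third (hypothetical) server is added.
balancer = RegionalLoadBalancer(["eu-1", "eu-2"])
balancer.add_server("eu-3")
counts = Counter(balancer.pick() for _ in range(300))
```

In this sketch each of the three servers ends up handling the same share of the 300 requests, which is the behavior that makes lines within one region move together on a traffic chart.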

If an individual server dies, the watchdogs will recognize this and inform the load balancers not to send traffic there. That's how Goal #3 is achieved ✅.
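The feedback loop between a watchdog and a load balancer might look something like the following sketch. The names `HealthAwareBalancer`, `report`, and `run_watchdog`, as well as the `probe` callback, are hypothetical; a real watchdog would run on a schedule and probe servers over the network.

```python
class HealthAwareBalancer:
    """Round-robin balancer that skips servers reported as unhealthy."""

    def __init__(self, servers):
        self.servers = list(servers)
        self.healthy = {server: True for server in self.servers}
        self._counter = 0

    def report(self, server, is_healthy):
        """Called by the watchdog to update a server's health status."""
        self.healthy[server] = is_healthy

    def pick(self):
        """Return the next healthy server, or fail if none is left."""
        candidates = [s for s in self.servers if self.healthy[s]]
        if not candidates:
            raise RuntimeError("no healthy servers available")
        server = candidates[self._counter % len(candidates)]
        self._counter += 1
        return server


def run_watchdog(balancer, probe):
    """Probe every server once and feed the result back to the balancer.

    `probe` is a hypothetical callable that returns True if the server responds.
    """
    for server in balancer.servers:
        balancer.report(server, probe(server))
```

If the probe starts failing for one server, the next `run_watchdog` pass takes it out of rotation, and a later successful probe puts it back.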

Additionally, in regions where this is possible, we have multiple groups of servers in different data centers, in different cities, managed by different providers. This adds an extra layer of reliability and tolerance to data center-related failures. It's not that we expect the world to crumble anytime soon, but we understand that providers can go down and cities can vanish; hence we have built safeguards against such situations (Goal #4 ✅).
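One way to reason about provider redundancy is to check whether a region would still have an online data center if a whole provider went down. The sketch below does exactly that; the `DataCenter` type, the function name, and the city and provider names are placeholders invented for this example.

```python
from dataclasses import dataclass


@dataclass
class DataCenter:
    city: str
    provider: str
    online: bool = True


def survives_provider_outage(datacenters, failed_provider):
    """True if at least one online data center is run by a different provider."""
    return any(
        dc.online and dc.provider != failed_provider
        for dc in datacenters
    )


# Hypothetical region with two data centers run by two different providers.
redundant_region = [
    DataCenter(city="city-a", provider="provider-x"),
    DataCenter(city="city-b", provider="provider-y"),
]
# Hypothetical region that depends on a single provider.
single_provider_region = [DataCenter(city="city-c", provider="provider-x")]
```

Here `survives_provider_outage(redundant_region, "provider-x")` is true, while the same call on `single_provider_region` is false, which is why redundancy is only available in regions with more than one provider.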

Regions, Cities, Datacenters

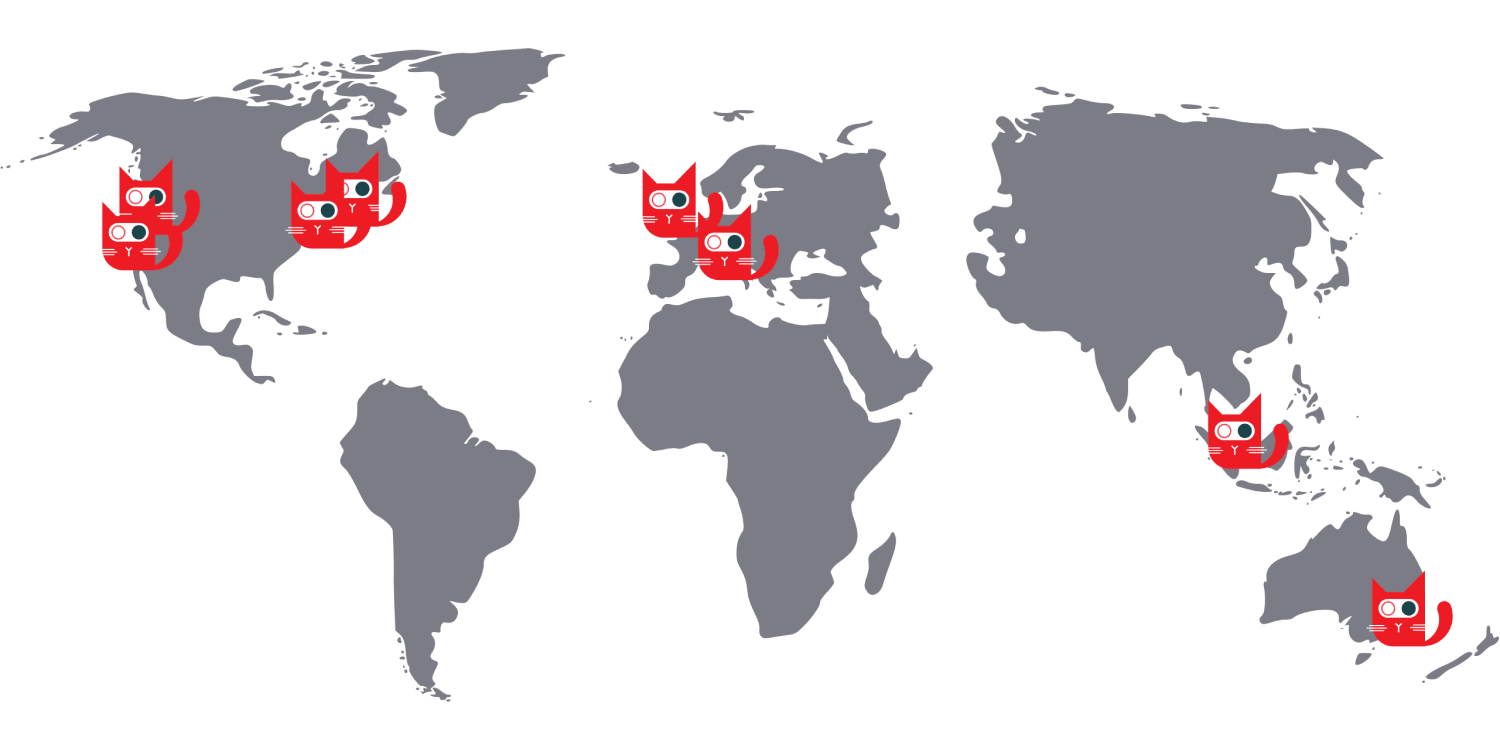

Let's take a closer look at the figure from earlier.

This map shows ConfigCat’s CDN network distributed across ten cities (some with multiple data centers) organized into five regions:

- West Coast

- East Coast

- Europe

- Singapore

- Australia

Bringing All the Pieces Together

Having understood how ConfigCat's CDN was designed and how the multiple layers of load balancers are implemented to ensure reliability and high availability, let's analyze the traffic trend chart from earlier to see how it all comes together.

Here's a breakdown of what the traffic trend chart shows:

- Each line represents traffic hitting an individual server.

- The groups of lines represent the traffic hitting a region.

- Lines in the same group move together because the load balancers in the second layer distribute the traffic roughly evenly across them.

- Lines in different groups follow different trends because of the difference in traffic trends throughout the globe.

What we see in this chart is a visual representation of ConfigCat's global CDN network handling traffic across its regions.

Why did I use the term "ConfigCat's global CDN network"? That's because ConfigCat now has multiple CDN networks distributed globally.

ConfigCat’s Multiple CDN Networks

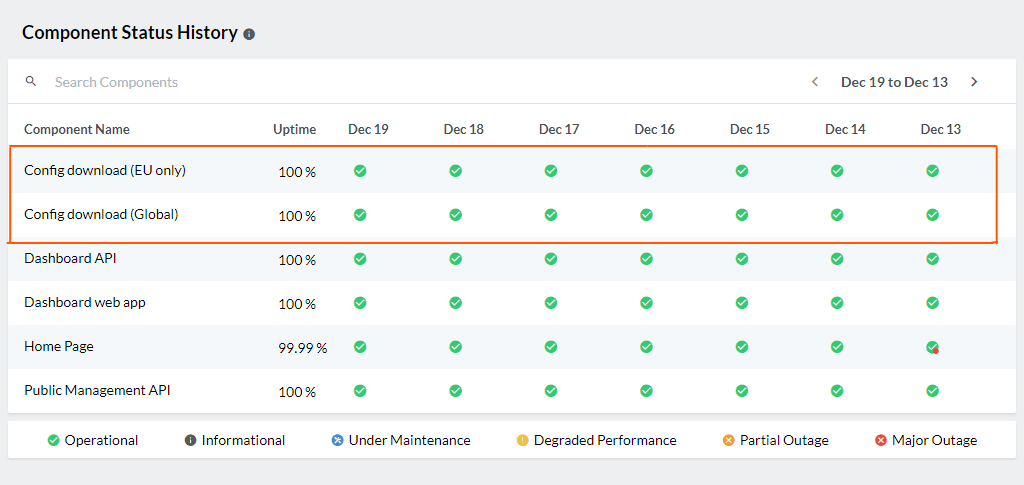

As stated earlier, ConfigCat has multiple CDN networks, and you can find a list of them and their availability status on the status page.

Each network is made up of a number of regions, cities, data centers, and servers. Cool, but why, you might wonder? Why multiple CDN networks?

To support Data Governance.

- This allows you to tell us if you only want your data distributed within the EU, so you can easily stay GDPR compliant.

- This also allows you to tell us if you want your data distributed globally, so your users can get the lowest response times possible.
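Conceptually, a data governance preference restricts which regions may serve your data. The sketch below models that with a simple lookup. The `Governance` enum, the mapping, and the assumption that the global network spans all five regions are illustrative only; this is not ConfigCat's SDK API.

```python
from enum import Enum


class Governance(Enum):
    GLOBAL = "global"
    EU_ONLY = "eu_only"


# Assumed mapping from governance preference to the regions allowed to serve data.
NETWORK_REGIONS = {
    Governance.GLOBAL: ["West Coast", "East Coast", "Europe", "Singapore", "Australia"],
    Governance.EU_ONLY: ["Europe"],
}


def regions_for(preference):
    """Return the regions that may hold data under the given preference."""
    return NETWORK_REGIONS[preference]


def is_allowed(region, preference):
    """True if the region may serve data under the given preference."""
    return region in regions_for(preference)
```

Under these assumptions, `is_allowed("Singapore", Governance.EU_ONLY)` is false, while the same region is allowed under `Governance.GLOBAL`. The trade-off is the one described above: fewer regions means stricter data locality but potentially higher response times for distant users.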

What's Next?

We're working towards adding new CDN networks. These will allow you to keep your data in:

- US only,

- Australia only,

- Or Singapore only.

Key Takeaways

- ConfigCat has multiple CDN networks equipped with multiple layers of load balancers to achieve speed, throughput, reliability, and compliance.

- ConfigCat's CDN is designed to achieve four goals: fast response times, unlimited throughput, resilience to server failures, and resilience to provider failures.

- ConfigCat's CDN network is distributed across ten cities (some with multiple data centers) organized into five regions: West Coast, East Coast, Europe, Singapore, and Australia.

- Each layer of load balancers serves a specific purpose, and together they ensure ConfigCat has a fast response time and high availability.

You can follow ConfigCat on Twitter, Facebook, LinkedIn, and GitHub to stay up-to-date with the latest feature flagging developments.