ConfigCat's validation phase was a success in our eyes, so it was time to take the next step. Beyond building a great product with great features, we had to provide a truly stable and reliable system.

In this phase we split our ASP.NET Core MVC web application into six parts: Homepage, Dashboard, Dashboard API, Public Management API, Docs, and Blog.

We also put effort into making our "config JSON" serving more reliable.

Dashboard API

The validation phase was great in terms of knowing our software and our customers better.

We had a roadmap of ConfigCat's future features, and we also got a lot of feedback and feature requests from our customers.

The best way to communicate with our customers was via our Community Slack.

These preliminary steps were a huge help when we designed our new API, whose only purpose was to serve our new Angular frontend.

Integration testing

One great advantage of using ASP.NET Core was how easy and handy integration testing is with the TestServer implementation. We could test the real endpoints, and after writing some well-aimed test helper classes we were able to cover complex scenarios easily.

    [Theory]
    [InlineData(null, HttpStatusCode.BadRequest, new[] { "The Name field is required." })]
    [InlineData("Name", HttpStatusCode.OK, null)]
    public async Task Test_Create(string name, HttpStatusCode expectedStatusCode, string[] expectedResponseMessageContains)
    {
        // Arrange: create an account, an organization and a product through the real endpoints.
        string accessToken = await testServer.CreateAndLoginAccountAsync();
        var organizationId = await testServer.CreateOrganizationIdAsync(accessToken);
        var productId = await testServer.CreateProductIdAsync(accessToken, organizationId);

        // Act and assert: create the environment and validate the response.
        await testServer.CreateEnvironmentAsync(accessToken, productId, name, new ResponseValidator
        {
            HttpStatusCode = expectedStatusCode,
            ResponseMessageContains = expectedResponseMessageContains
        });
    }

Writing these kinds of integration tests, and writing a lot of them, helped us create an easily understandable, handy API, which later served as a reference for our Public Management API.
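
To give an idea of what such a test helper can look like, here is a minimal sketch of a `ResponseValidator`. Only the class name and its two property names come from the test above; the `ValidateAsync` method and its behavior are assumptions made for illustration, not our actual implementation.

```csharp
#nullable enable
using System;
using System.Net;
using System.Net.Http;
using System.Threading.Tasks;

// Hypothetical sketch: only the class name and the two property names
// appear in the test above; everything else is an assumption.
public class ResponseValidator
{
    // The status code the response is expected to have.
    public HttpStatusCode HttpStatusCode { get; set; } = HttpStatusCode.OK;

    // Fragments that must all appear in the response body (null means "do not check").
    public string[]? ResponseMessageContains { get; set; }

    // Throws InvalidOperationException when the response does not match the expectations.
    public async Task ValidateAsync(HttpResponseMessage response)
    {
        if (response.StatusCode != HttpStatusCode)
        {
            throw new InvalidOperationException(
                $"Expected status {HttpStatusCode}, got {response.StatusCode}.");
        }

        if (ResponseMessageContains == null || ResponseMessageContains.Length == 0)
        {
            return;
        }

        string body = await response.Content.ReadAsStringAsync();
        foreach (string expected in ResponseMessageContains)
        {
            if (!body.Contains(expected))
            {
                throw new InvalidOperationException(
                    $"Response body does not contain \"{expected}\".");
            }
        }
    }
}
```

A helper such as `CreateEnvironmentAsync` could then send the request and pass the received `HttpResponseMessage` to `ValidateAsync`, which keeps the status and message checks out of the individual tests.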

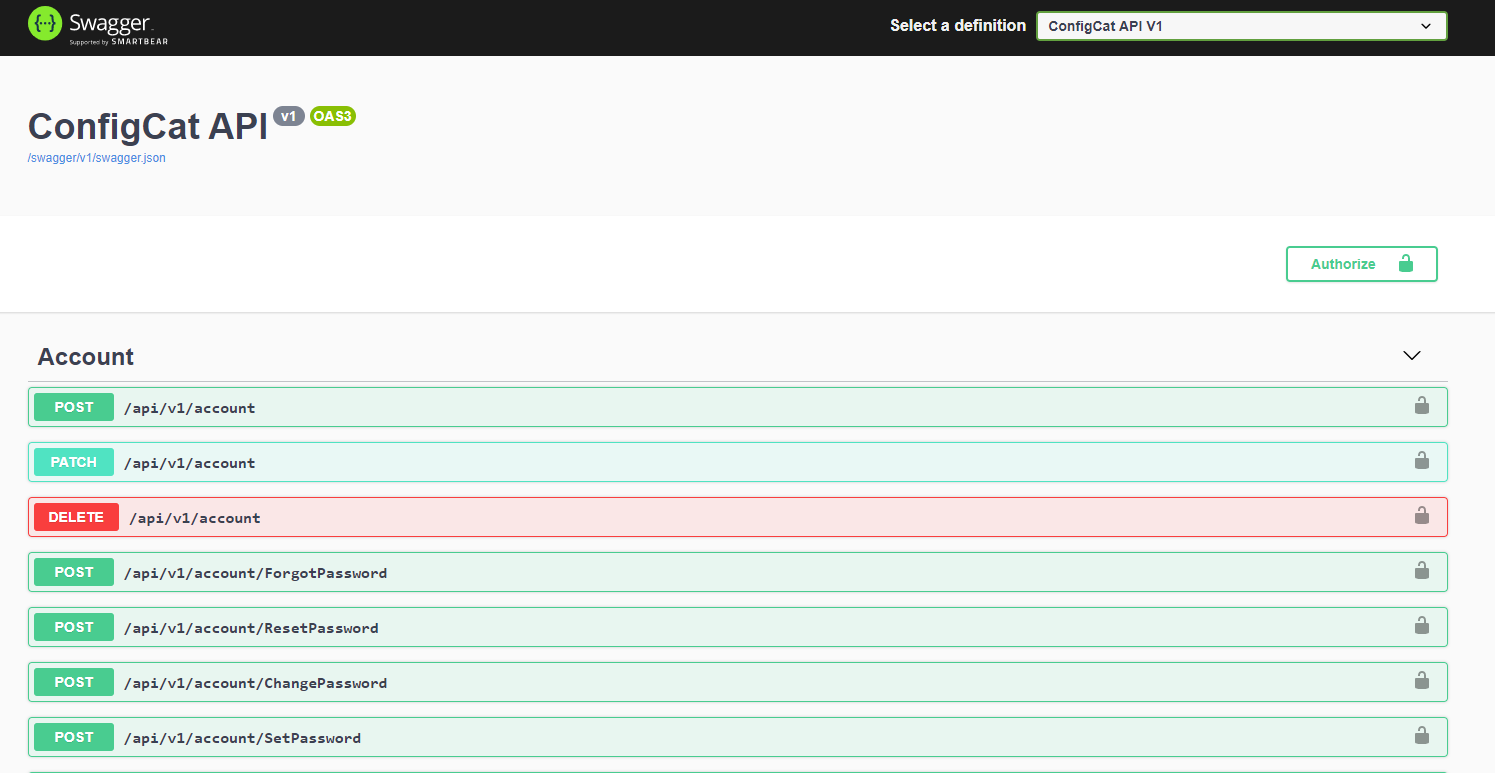

Swagger

While developing the API, we wanted a fast and easy way to test the endpoints. Generating Swagger documentation from our API was a great help during both the API development and the Angular frontend development.

Dashboard

Detaching our API from the old application was quite a long, but easily understandable and manageable task for us.

The really hard part was deciding which framework we should use for the frontend.

We evaluated Angular, React and Vue.js and decided to go with Angular.

With a well-written API and some great layout plans in Figma, building it was just a matter of time (~2-3 months...).

Homepage, Docs, Blog

After a successful brand name change from BetterConfig to ConfigCat, and after receiving our Homepage's new design with the cool Cat logo from JacsoMedia Smart Web Applications, we started working on the homepage/blog/docs separation and rebranding.

We used our old ASP.NET Core MVC web application for serving the Homepage, and we started using Docusaurus for the blog and the documentation.

Later we migrated the Homepage's code from the ASP.NET Core MVC web app to a simple Angular web app, which Scully turns into almost pure HTML during our CI/CD process.

These steps helped us a lot to score higher in the PageSpeed Insights tests and were a great step forward for our SEO.

CDN improvement

As more and more customers started using ConfigCat actively, we decided to make our core component, the "config JSON" serving, more resilient to failures.

We brought a second virtual private server online that could also serve the "config JSON" files, using rsync to synchronize them from the primary server to the secondary one.

We put an Azure Traffic Manager with the priority routing method in front of the two nodes.
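
Priority routing means that traffic goes to the highest-priority endpoint that is currently healthy, and falls back to the next one only when that endpoint is down. The selection rule can be sketched in a few lines. This is only an illustration of the idea and not how Traffic Manager is implemented (it works at the DNS level); the `CdnEndpoint` type and the host names are hypothetical.

```csharp
#nullable enable
using System.Collections.Generic;
using System.Linq;

// Hypothetical model of a CDN node: a lower Priority number means "preferred".
public record CdnEndpoint(string Host, int Priority, bool IsHealthy);

public static class PriorityRouter
{
    // Returns the healthy endpoint with the lowest priority number,
    // or null when no endpoint is healthy.
    public static CdnEndpoint? Select(IEnumerable<CdnEndpoint> endpoints) =>
        endpoints
            .Where(e => e.IsHealthy)
            .OrderBy(e => e.Priority)
            .FirstOrDefault();
}
```

With a healthy primary (priority 1) and a healthy secondary (priority 2), `Select` returns the primary; if the primary is marked unhealthy, it returns the secondary.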

This was the point where we started to call this service our "CDN". At ConfigCat the abbreviation stands for Config Delivery Network rather than Content Delivery Network.

This was just an initial step for making our CDN more resilient.

By introducing Docker and Docker Swarm into our infrastructure later, we made this setup a lot easier to maintain.

We are still using our own CDN instead of the well-known CDN providers. The CDN is the core component of ConfigCat, and we want to keep it under our full control. Cost-efficiency was also an important factor in this decision.

CDN analytics

We wanted to get an overview of our CDN usage. We ran some load tests with loader.io, so we knew the limits of our 5 USD/month virtual private servers. We wanted to be notified when we approached those limits so that we could add more CDN nodes.

We ran a proof of concept with the Elastic Stack, which covered both our infrastructure monitoring and our CDN analytics needs for a while. It worked pretty well, but the self-hosted Elastic Stack was the most expensive part of our entire infrastructure.

Using the ELK Cloud infrastructure with our rapidly growing request count wasn't an option either.

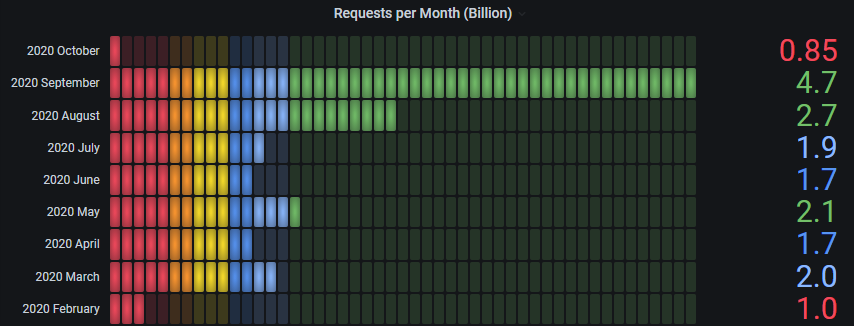

We decided to go with a custom Nginx access log parser that writes to our own database, with a Grafana dashboard on top for the CDN analytics. Later we started using Site24x7 for monitoring our whole infrastructure.
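
To illustrate the idea of such a parser, here is a minimal sketch that reads lines in Nginx's predefined "combined" log format and counts requests per path. It is not our production parser: the log format, the type and method names, and the choice of aggregation are all assumptions, and writing the results to a database is left out.

```csharp
#nullable enable
using System.Collections.Generic;
using System.Globalization;
using System.Text.RegularExpressions;

// Hypothetical shape of a parsed log line (the path includes any query string).
public record AccessLogEntry(string RemoteAddress, string Method, string Path, int Status, long BodyBytes);

public static class AccessLogParser
{
    // Assumes the "combined" format:
    // $remote_addr - $remote_user [$time_local] "$request" $status $body_bytes_sent "$http_referer" "$http_user_agent"
    private static readonly Regex LinePattern = new Regex(
        @"^(?<ip>\S+) \S+ \S+ \[[^\]]+\] ""(?<method>\S+) (?<path>\S+) [^""]*"" (?<status>\d{3}) (?<bytes>\d+) ""[^""]*"" ""[^""]*""$",
        RegexOptions.Compiled);

    // Returns the parsed entry, or null when the line does not match the expected format.
    public static AccessLogEntry? TryParse(string line)
    {
        Match match = LinePattern.Match(line);
        if (!match.Success)
        {
            return null;
        }

        return new AccessLogEntry(
            match.Groups["ip"].Value,
            match.Groups["method"].Value,
            match.Groups["path"].Value,
            int.Parse(match.Groups["status"].Value, CultureInfo.InvariantCulture),
            long.Parse(match.Groups["bytes"].Value, CultureInfo.InvariantCulture));
    }

    // Counts the requests per path, silently skipping lines that cannot be parsed.
    public static Dictionary<string, int> CountByPath(IEnumerable<string> lines)
    {
        var counts = new Dictionary<string, int>();
        foreach (string line in lines)
        {
            AccessLogEntry? entry = TryParse(line);
            if (entry is null)
            {
                continue;
            }

            counts[entry.Path] = counts.TryGetValue(entry.Path, out int current) ? current + 1 : 1;
        }

        return counts;
    }
}
```

In a real pipeline the aggregated counts would be written to a database on a schedule so that a Grafana dashboard can query them.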

Last month we served over 4.7 billion "config JSON" requests with the help of some 5 USD/month virtual private servers.